Well, it’s long overdue that I left the comfort of my Windows GUI and ventured into the world of Linux. Mind you, I have dabbled a little over the years: I watched some training videos about 18 years ago and even installed Ubuntu on an old laptop at that time. As I recall, I never ventured far past the GUI. I think I muddled through an install of SQL Server on Linux once, relying a lot on Google and help from co-workers.

This time it’s for real. I’ve signed up for some college classes and will be earning a Certificate in Linux/Unix Administration. I’ll be completing this journey with my oldest son, who is considering joining me in the field of information technology.

I’m going to try to document everything I learn along the way, so that it might help someone else in their journey, but mostly so I can remember what I did the next time I have to do it. Now keep in mind, I have NO IDEA if what I am doing is the right way, best way, or most secure way of doing things. So anything you read should be taken with a grain of salt, and if you are actually administering a production workload you probably should get advice from a more experienced Linux expert. And if you ARE a Linux expert, please feel free to add some comments and tell me what I am doing wrong or how I could do things better!

This will probably be the first in a series of articles if everything goes to plan.

Linux Day 1

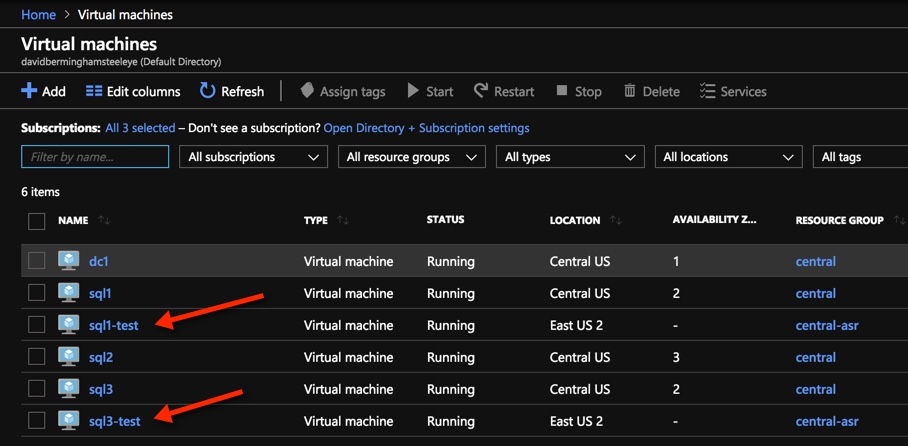

I haven’t started class yet, but I bought the recommended book, The Linux Command Line, 2nd Edition: A Complete Introduction. I quickly learned that the text makes some assumptions it doesn’t cover. For instance, it assumes you know how to install and connect to some version of Linux. For me, the easiest thing to do was to use some of my Azure credits and spin up a Linux VM in the cloud. I won’t go through all the details of what I did in Azure, but basically I spun up a Red Hat Enterprise Linux 8.2 VM and opened up SSH port 22 so I could connect remotely. I also chose to authenticate with an SSH key pair instead of a password.

So great, my VM is running. Now how do I connect?

Connecting from a Mac

My main PC is a MacBook Pro. After a little searching around, I decided I would use the Terminal program for my first attempt at connecting to my instance. I discovered that you can create a “New Remote Connection”. If I recall, when I used Windows I used a program called PuTTY.

Through some trial and error and some Google searches, I finally found the magic combination which allowed me to connect.

CHMOD

chmod is one of the things I do recall from my very limited experience with Linux. It is basically the way to change file permissions. I don’t know all the ins and outs of chmod yet, but what I found out is that before I could connect to my instance, I had to lock down the permissions on the private key that I downloaded when I created the Linux VM. This was the error message I received.

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

@ WARNING: UNPROTECTED PRIVATE KEY FILE! @

@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@@

Permissions 0644 for '/Users/davidbermingham/Downloads/Linux1_key.pem' are too open.

It is required that your private key files are NOT accessible by others.

This private key will be ignored.

Load key "/Users/davidbermingham/Downloads/Linux1_key.pem": bad permissions

root@xx.xx.xx.xxx: Permission denied (publickey,gssapi-keyex,gssapi-with-mic).

[Process completed]

I discovered that I needed to run the following command to lock down the permissions on the private key so that only the owner of the file has full read/write access.

Last login: Sun Aug 28 17:59:26 on ttys005

MacBook-Pro-2:~ davidbermingham$ chmod 0600 /Users/davidbermingham/Downloads/Linux1_key.pem

MacBook-Pro-2:~ davidbermingham$
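If you want to see what that chmod actually changes, here is a minimal sketch you can run safely. It assumes a throwaway file created with mktemp standing in for the key (it is not a real private key), and it uses ls -l to show the permissions before and after. In the octal mode, 6 means read plus write for the owner, and each 0 means no access for the group and for everyone else.

```shell
# Hypothetical stand-in for the downloaded key; an empty temp file, not a real key.
key="$(mktemp)"

chmod 0644 "$key"      # the "too open" permissions from the warning
ls -l "$key"           # starts with -rw-r--r-- : group and others can read it

chmod 0600 "$key"      # owner can read and write, nobody else gets anything
ls -l "$key"           # starts with -rw-------

# Keep just the 10 permission characters so they are easy to inspect.
perms="$(ls -l "$key" | cut -c1-10)"
echo "$perms"

rm -f "$key"           # clean up the throwaway file
```

The same ls -l check works on the real .pem file if you want to confirm the change took effect.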

Connect with SSH

After I changed the permissions I was able to connect with SSH as shown below.

Last login: Sun Aug 28 18:00:09 on ttys006

MacBook-Pro-2:~ davidbermingham$ ssh -i /Users/davidbermingham/Downloads/Linux1_key.pem azureuser@xx.xx.xxx.xxx

Activate the web console with: systemctl enable --now cockpit.socket

This system is not registered to Red Hat Insights. See https://cloud.redhat.com/

To register this system, run: insights-client --register

Last login: Sun Aug 28 21:19:38 2022 from 98.110.69.71

[azureuser@Linux1 ~]$

Now What?

Now that I have what appears to be a working terminal into my Linux VM, I can move on in Chapter 1 of my book. But before I do that, I’m already thinking about how I could use my iPad Pro to open a terminal over SSH to my cloud instance. I think I’d much rather bring that to class than my whole laptop. A quick search tells me that there are apps that make that entirely possible, so I’ll be looking into that as well.

Finishing chapter 1, I learned what the following commands do: date, cal, df, free, exit.

Try them out on your own. I’m moving on to Chapter 2.
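If you would like to run them all in one go, here is a small sketch. The loop and the command -v check are my own additions, not something from the book: they just skip any command that happens not to be installed (for example, free does not exist on a Mac). I left exit out of the loop because it would end the session.

```shell
checked=0
for cmd in date cal df free; do
    if command -v "$cmd" >/dev/null 2>&1; then
        echo "== $cmd =="
        "$cmd"                     # date: current time, cal: calendar, df: disk space, free: memory
    else
        echo "== $cmd is not installed on this system =="
    fi
    checked=$((checked + 1))
done

# exit                             # ends the terminal session; uncomment when you are done
```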

Chapter 2: Navigation

In the first few paragraphs of Chapter 2 I learned something that clears up years of confusion on my part. Much like Windows, Linux has a hierarchical directory structure. However, there is only ever one root directory and a single file system tree. If you attach other disks, they are mounted into that tree wherever the system administrator decides to mount them.
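A quick way to see the single tree for yourself, as a rough sketch: ls / lists the top-level directories, and df shows which file system a given directory lives on. The -P flag is only there to keep df's output on one line per file system; the exact devices and sizes will differ on every machine.

```shell
ls /                                  # top-level directories of the one and only tree

df -P /                               # the file system mounted at the root of the tree

# The last column of the last line is the mount point, which is / for the root.
root_line="$(df -P / | tail -n 1)"
echo "$root_line"
```

Running df with no arguments lists every mounted file system along with the directory it is attached to.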

Here are some of the commands introduced in Chapter 2. They are pretty self-explanatory.

pwd – Print Working Directory

pwd will show you the current directory you are working in.

[azureuser@Linux1 ~]$ pwd

/home/azureuser

[azureuser@Linux1 ~]$

ls – List Contents of Directory

ls lists the contents of a directory. Fun fact: filenames that begin with a period are hidden. In order to see hidden files, you need to use ls -a.
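Here is a small sketch that shows the difference. It assumes a scratch directory created with mktemp -d, and the two filenames are made up for the demonstration.

```shell
demo="$(mktemp -d)"                              # hypothetical scratch directory
touch "$demo/visible.txt" "$demo/.hidden_file"   # one normal file, one hidden file

ls "$demo"                                       # lists only visible.txt
ls -a "$demo"                                    # also lists . and .. and .hidden_file

plain="$(ls "$demo")"
all="$(ls -a "$demo")"

rm -r "$demo"                                    # clean up
```

The . and .. entries that ls -a shows are the directory itself and its parent.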

cd – Change Directory

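Absolute Path Names

An absolute path name starts at the root directory (/) and spells out every directory on the way to the target, for example /usr/bin.

Relative Path Names

A relative path name specifies the directory relative to the current working directory. It uses two special notations: . (dot) for the current directory and .. (dot dot) for its parent.

“cd” with no arguments changes to your home directory

“cd -” changes to the previous working directory

“cd ~username” changes to that user’s home directory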
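To tie those together, here is a short walk-through you can paste into a terminal. It assumes /usr/bin exists, which it should on any ordinary Linux system, and the variable names are only there so you can inspect the results afterwards.

```shell
start="$(pwd)"            # remember where we began

cd /usr/bin               # absolute path: starts at the root directory
after_abs="$(pwd)"
echo "$after_abs"         # /usr/bin

cd ..                     # relative path: .. is the parent directory
after_parent="$(pwd)"
echo "$after_parent"      # /usr

cd ./bin                  # relative path: . is the current directory
pwd                       # /usr/bin

cd                        # no arguments: go to your home directory
pwd

cd -                      # jump back to the previous working directory and print it

cd "$start"               # return to where we began
```

I left out cd ~username because you can only enter another user’s home directory if its permissions allow it.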

Some Fun Facts

- Filenames and commands are case sensitive in Linux.

- Avoid spaces in filenames. Linux allows them, but they make command-line work awkward. Stick to period, dash, or underscore for punctuation, and use an underscore where you would otherwise put a space.

- The Linux operating system has no concept of file extensions, but some applications do.
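The first two facts are easy to try out. This is a minimal sketch, assuming you run it on the Linux VM (the default Mac file system is not case sensitive, so the results differ there). The filenames are made up for the demonstration.

```shell
demo="$(mktemp -d)"              # hypothetical scratch directory
cd "$demo"

touch notes.txt Notes.txt        # two different files, because case matters
touch "my file.txt"              # a space works, but only if you quote the name
touch my_file.txt                # the underscore version needs no quoting

ls                               # lists all four files
count="$(ls | wc -l)"
echo "$count"

cd - >/dev/null                  # go back to where we were
rm -r "$demo"                    # clean up
```

Without the quotes, touch my file.txt would create two separate files named my and file.txt, which is exactly why spaces are a nuisance.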

That’s what I learned on day one. I got through the first two chapters and I feel like I’ll be going into class a little ahead of the game. Now I have to get my son to crack his book and show him what I learned.

I’ll pick up the series again later this week.