Microsoft recently released a patch that allows you to specify whether or not a cluster node can vote in a majority quorum model. This is particularly useful in a multisite cluster configuration with an odd number of nodes.

http://support.microsoft.com/kb/2494036

Consider the following…

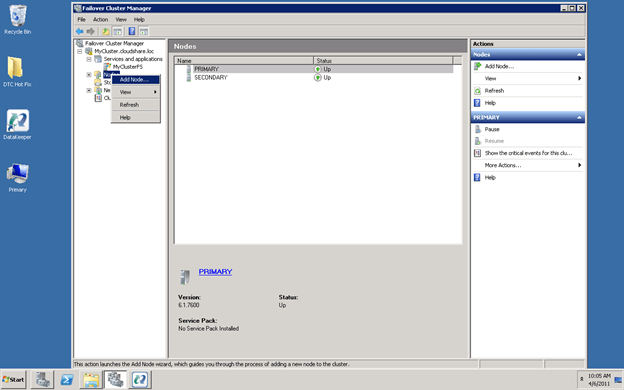

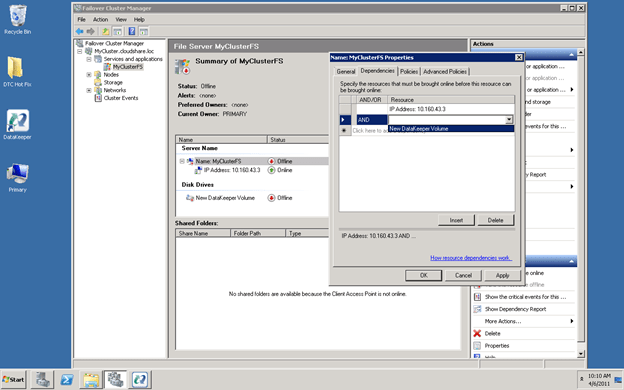

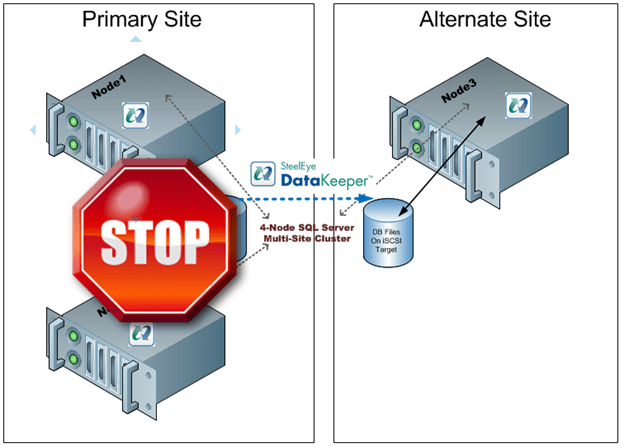

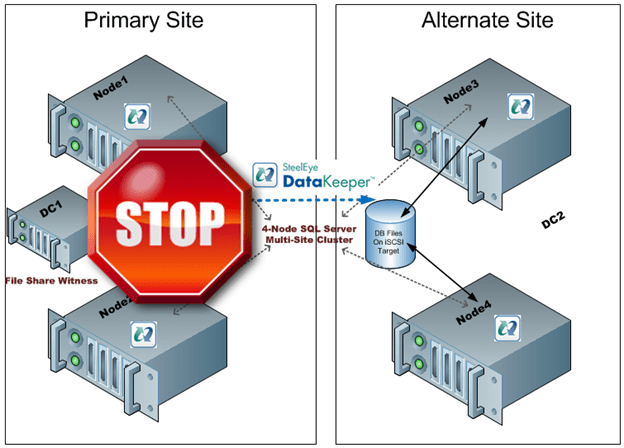

I have a two-node cluster in a local site for high availability, and I wish to extend it to another location by adding a third node for disaster recovery. Sounds like a great plan, as a multisite cluster is just about the most robust DR plan you can implement. However, you will not be able to take advantage of one of the best features of a multisite cluster – automatic recovery in the event of a site loss. If you were to lose your primary site, the DR site contains only one cluster node (see Figure 1). That is just one vote out of three in the cluster, so a majority cannot be obtained and Node3 will not come online automatically. The only way to bring Node3 online is to force the quorum online, which defeats the purpose of a multisite cluster by requiring human intervention for a failover to happen.

Figure 1 – In a typical 3-node multisite cluster, if you lose the primary site the DR site cannot obtain a majority, so failover never occurs.
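The vote arithmetic behind Figure 1 can be sketched in a few lines of Python. This is only an illustration of the majority rule described above, not anything the cluster service exposes; the function name `has_majority` is made up for this example.

```python
def has_majority(votes_held: int, total_votes: int) -> bool:
    """Return True when votes_held is strictly more than half of total_votes."""
    return votes_held > total_votes // 2


# Figure 1: Node1 and Node2 in the primary site, Node3 in the DR site.
total_votes = 3
dr_site_votes = 1       # only Node3 survives the loss of the primary site
primary_site_votes = 2  # Node1 and Node2 survive the loss of the DR site

print(has_majority(dr_site_votes, total_votes))       # False: no automatic failover
print(has_majority(primary_site_votes, total_votes))  # True: primary site stays online
```

With one vote out of three, the DR site can never win on its own, which is why the quorum has to be forced.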

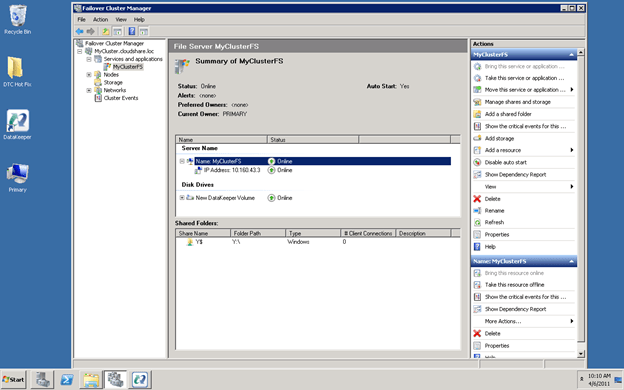

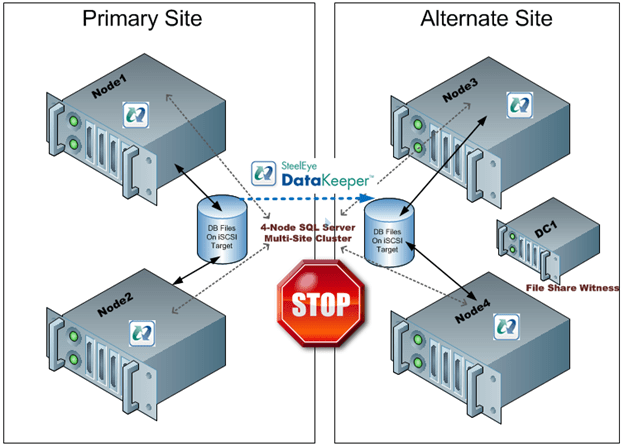

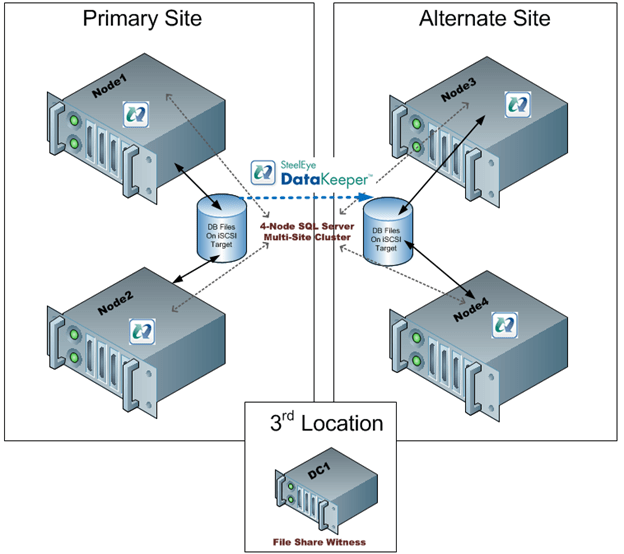

The only “safe” way to have automatic failover in a multisite cluster is to have an equal number of nodes in each site and to have a file share witness in a 3rd location with connectivity back to both the primary site and the DR site. This concept is a little difficult to grasp at first, so let me attempt to explain through illustrations.

Figure 2 – With an equal number of nodes in both locations and the file share witness in the Primary Site, a loss of the Primary Site would not result in a failover, as the Alternate Site would only have 2 out of 5 votes, not a majority.

Figure 3 – If the file share witness were moved to the Alternate Site, a failure of the WAN would cause a false failover, as the Alternate Site would form a majority and come online.

Figure 4 – With the file share witness in a 3rd location, failover will occur if the Primary Site is lost, and false failovers are avoided in the case of a connectivity failure between the Primary and Alternate Site.
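To make the three witness placements easier to compare, here is a small Python sketch that counts the votes the Alternate Site can gather in each case. It assumes two nodes per site plus one witness (5 votes total), and it assumes that when only the WAN link between the sites fails, a witness in the 3rd location stays with the Primary Site, which is already running the cluster. The function and argument names are invented for illustration.

```python
NODES_PER_SITE = 2
TOTAL_VOTES = 2 * NODES_PER_SITE + 1  # four nodes plus the file share witness


def alternate_site_comes_online(witness_site: str, failure: str) -> bool:
    """witness_site: 'primary', 'alternate' or 'third'.
    failure: 'primary_site_lost' or 'wan_link_lost'."""
    votes = NODES_PER_SITE  # the Alternate Site always has its own nodes
    if witness_site == "alternate":
        votes += 1  # witness is local, so it is always reachable
    elif witness_site == "third" and failure == "primary_site_lost":
        votes += 1  # nobody else is left to claim the witness
    # Assumption: on 'wan_link_lost' a witness in the 3rd location
    # remains with the Primary Site, so no vote is added here.
    return votes > TOTAL_VOTES // 2


# Figure 2: witness in the Primary Site, Primary Site lost -> no failover
print(alternate_site_comes_online("primary", "primary_site_lost"))  # False
# Figure 3: witness in the Alternate Site, WAN lost -> false failover
print(alternate_site_comes_online("alternate", "wan_link_lost"))    # True
# Figure 4: witness in a 3rd location
print(alternate_site_comes_online("third", "primary_site_lost"))    # True
print(alternate_site_comes_online("third", "wan_link_lost"))        # False
```

Only the 3rd-location placement gives the desired answer for both failure types.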

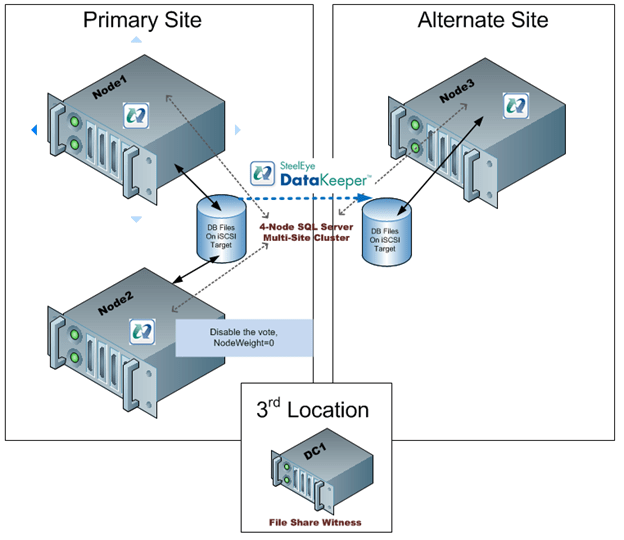

As you can see, Figure 4 represents the only reasonable configuration that supports automatic failover. However, this assumes that there are an equal number of nodes in each location. If you have the original 3-node configuration you are stuck, because adding a file share witness does not help: you can never achieve a majority in the Alternate Site…until today! Microsoft released a patch that lets you specify whether or not a node gets a vote. This means you can build a 3-node cluster as illustrated in Figure 1, yet take advantage of a file share witness in a 3rd location as illustrated in Figure 4. By simply telling one of the nodes in the Primary Site not to vote in the cluster, you allow the Alternate Site to form a majority with the file share witness and come online. Assuming connectivity between your 3rd location and the Alternate Site is relatively reliable, there really is no downside to the configuration shown in Figure 5.

Figure 5 – By disabling the vote on Node2 you can deploy a 3-node multisite cluster with a file share witness and safely support automatic failover to the DR site. The same concept can be applied to any cluster with an odd number of nodes.
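The effect of removing Node2's vote can be checked with the same kind of arithmetic. The sketch below is a hypothetical model in Python, not the hotfix's actual interface: each node carries a weight of 1 or 0, the file share witness in the 3rd location carries one vote, and we ask whether Node3 plus the witness form a majority after the Primary Site is lost.

```python
def alternate_site_has_majority(node_votes: dict, witness_votes: int = 1) -> bool:
    """Node3 is the only node in the Alternate Site; the witness is in a 3rd location."""
    total = sum(node_votes.values()) + witness_votes
    surviving = node_votes["Node3"] + witness_votes  # what is left after the Primary Site is lost
    return surviving > total // 2


# Every node votes: 4 votes in total, the Alternate Site holds only 2.
all_nodes_vote = {"Node1": 1, "Node2": 1, "Node3": 1}
# Figure 5: Node2's vote is disabled, so 3 votes in total and 2 survive.
node2_vote_disabled = {"Node1": 1, "Node2": 0, "Node3": 1}

print(alternate_site_has_majority(all_nodes_vote))       # False
print(alternate_site_has_majority(node2_vote_disabled))  # True
```

Dropping one Primary Site vote turns the total from four into three, so the two surviving votes become a majority.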

While this is a great solution, you still need that 3rd location for the file share witness. If you don’t have that 3rd location you will just have to settle for a manual switchover and keep the file share witness in the primary site if you have an even number of nodes.

The PreventQuorum switch is also included in this hotfix and will be of interest to people deploying multisite clusters. We'll explore that option in a future article.

Get the hot fix here…

A hotfix is available to let you configure a cluster node that does not have quorum votes in Windows Server 2008 and in Windows Server 2008 R2